Originally posted by Lowell Goudge

First of all, there need to be some simple guidelines set for exposure.

Specifically, you need to ask everyone to keep the histogram centered, because in the center the histogram is almost linear (in greyscale value) with f-stop or exposure value.

Secondly, you need to have everyone who takes images calibrate the contrast setting of their camera. You do this by setting the camera to a middle f-stop, then changing the f-stop and plotting greyscale as a function of f-stop. I have found, for example, that just changing from maximum to minimum contrast (shooting JPEGs, of course) can change the greyscale range per stop around the mean value of 128 by 20%, i.e., at minimum contrast each stop is a greyscale change of approximately 40, and at maximum contrast it is 50. People offering shots with different settings will give you errors due to those settings.

Ideally, the exposures would be calibrated raw images and the histogram spike would be as close to the maximum as possible without clipping a channel. However, I'm not expecting perfection, just characterization good enough to drive a recognition and/or synthesis algorithm. The same goes for the contrast issues. Frankly, as soon as one uses JPEGs, it's all very approximate anyway....
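For anyone who wants to try the contrast calibration suggested in the quoted post, here is a minimal sketch of the "plot greyscale against f-stop" step, reduced to a straight-line fit near mid-grey. The function name `greyscale_per_stop` and the sample readings are hypothetical; the readings are made up to match the roughly 40 and 50 levels per stop mentioned above, and exposure is expressed as an offset in stops from the middle setting (positive meaning more light), which is my assumption rather than anything specified in the thread.

```python
import numpy as np

def greyscale_per_stop(stop_offsets, grey_levels):
    """Fit grey = slope * stops + intercept by least squares.

    stop_offsets: exposure offsets in stops from the middle setting
                  (assumed convention: positive = more light).
    grey_levels:  mean 8-bit greyscale value measured at each offset.
    Returns (slope, intercept); slope is greyscale levels per stop.
    """
    x = np.asarray(stop_offsets, dtype=float)
    y = np.asarray(grey_levels, dtype=float)
    slope, intercept = np.polyfit(x, y, 1)
    return slope, intercept

# Hypothetical readings around mid-grey (128), not measured data.
stops = [-1, 0, 1]
min_contrast = [88, 128, 168]   # about 40 levels per stop
max_contrast = [78, 128, 178]   # about 50 levels per stop

slope_min, _ = greyscale_per_stop(stops, min_contrast)
slope_max, _ = greyscale_per_stop(stops, max_contrast)
print(f"min contrast: {slope_min:.1f} levels/stop")
print(f"max contrast: {slope_max:.1f} levels/stop")
```

The fit is only meaningful over the near-linear middle of the histogram, so readings close to 0 or 255 should be left out.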

Originally posted by Lowell Goudge

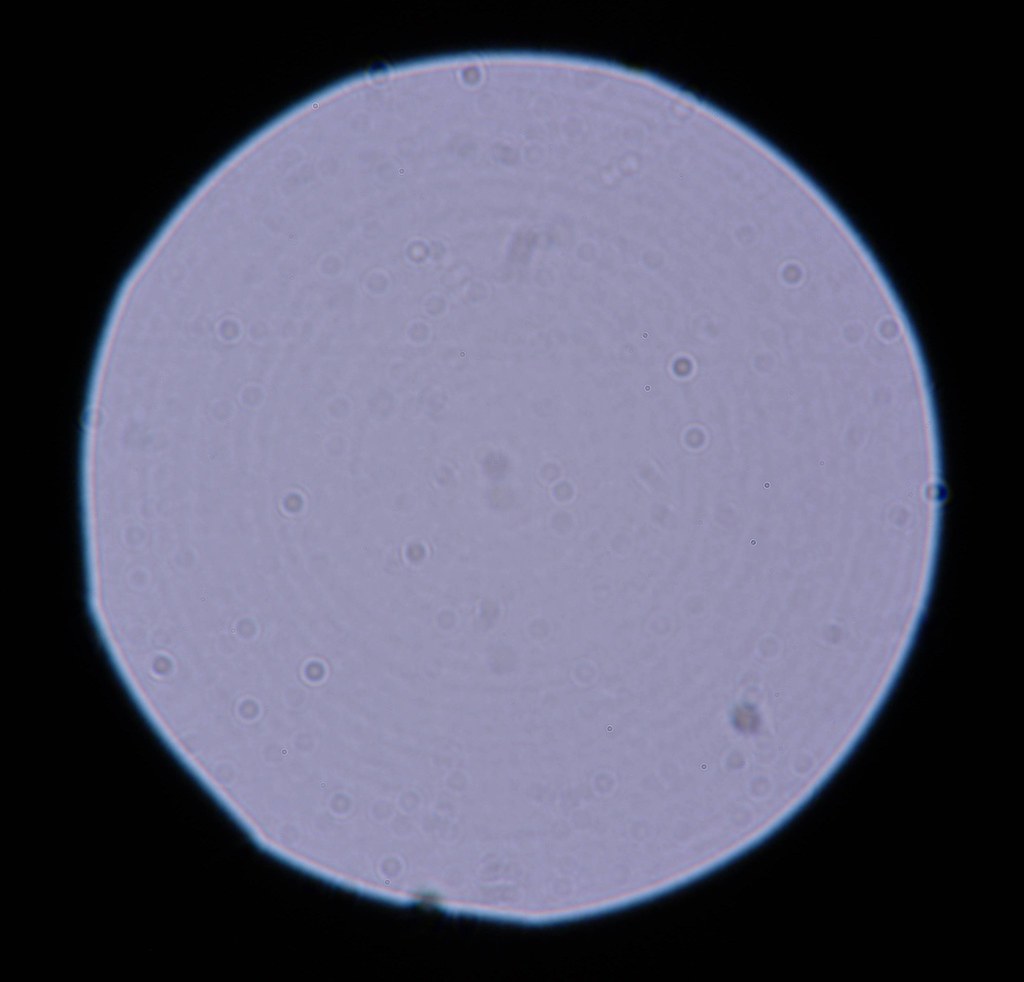

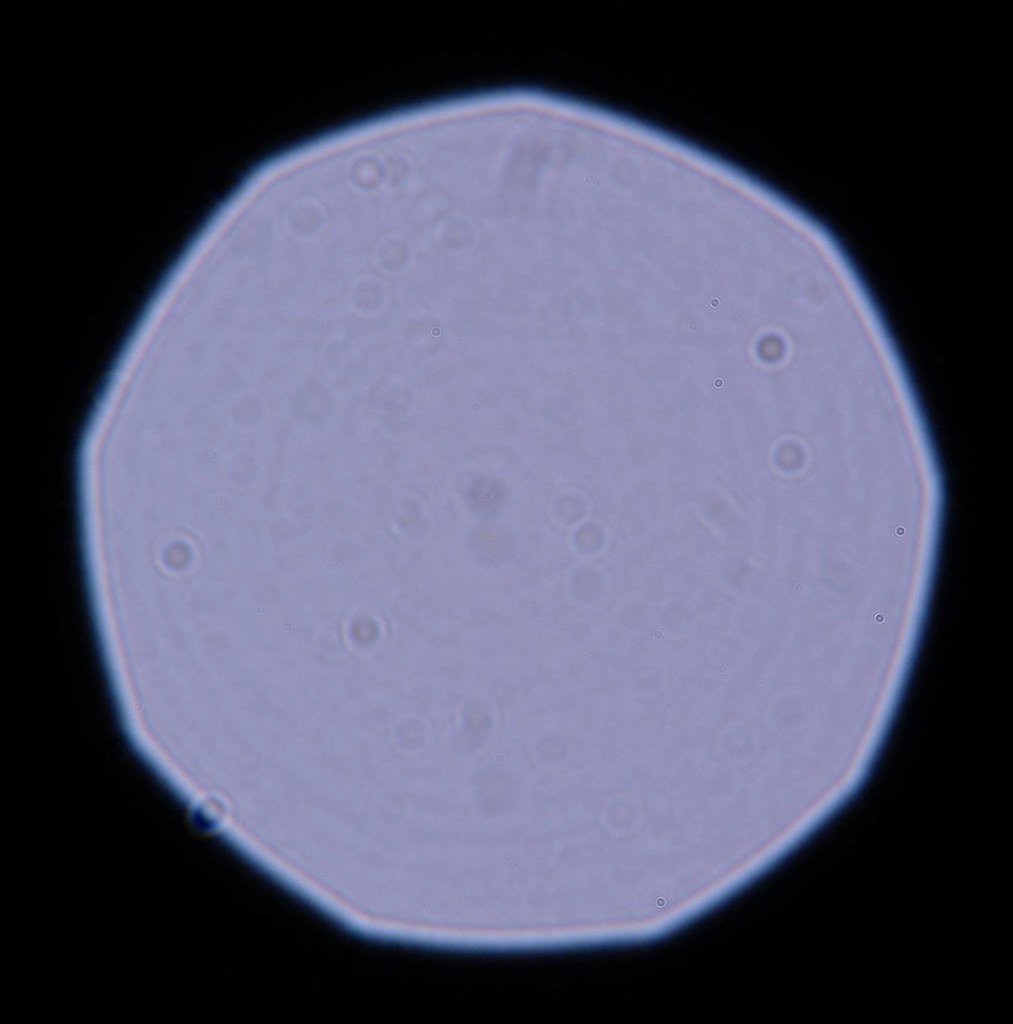

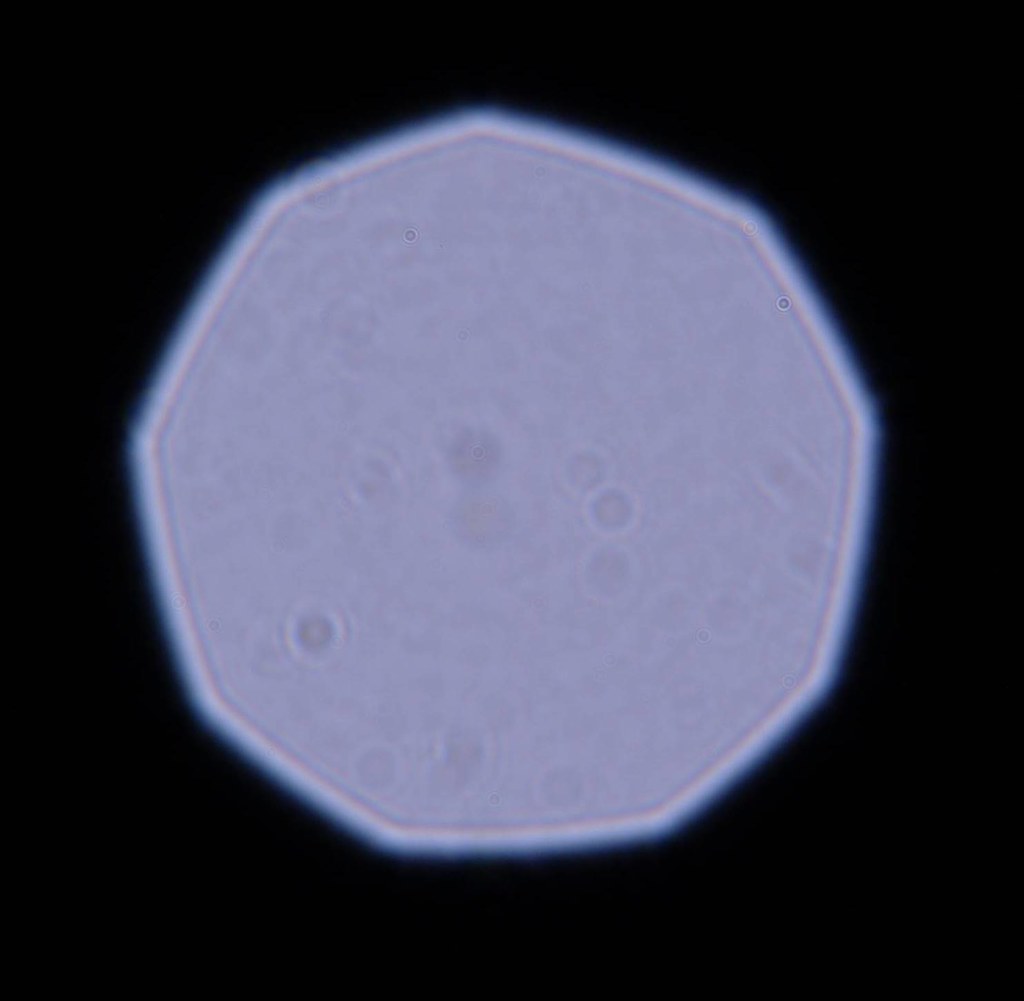

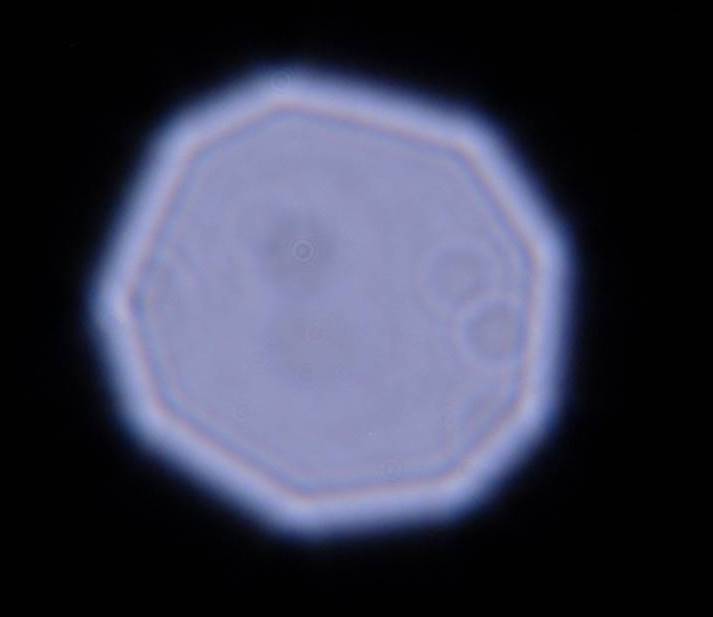

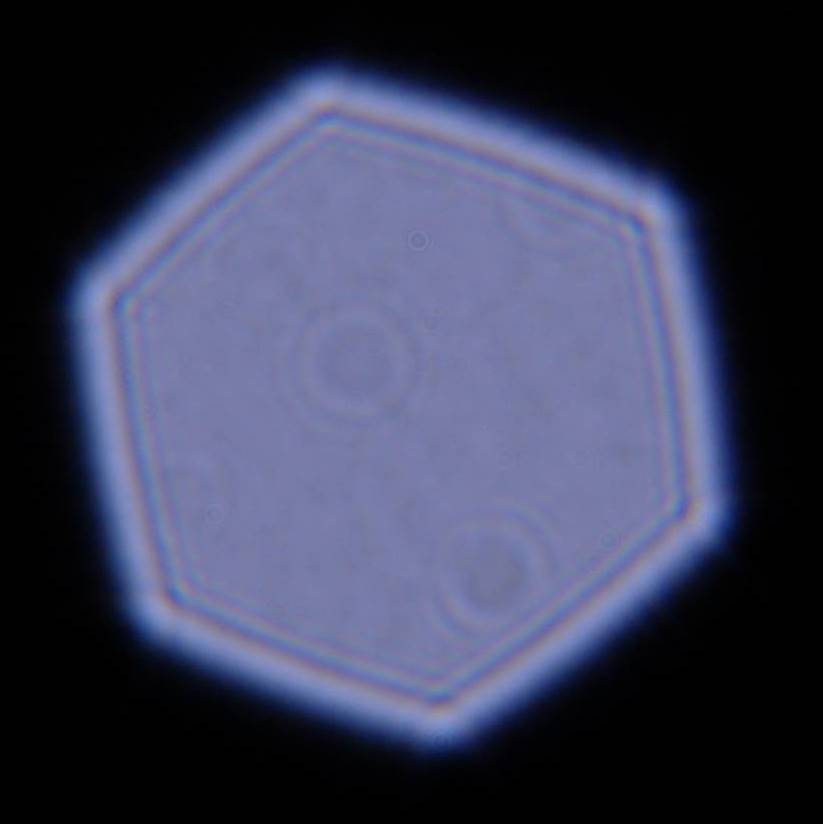

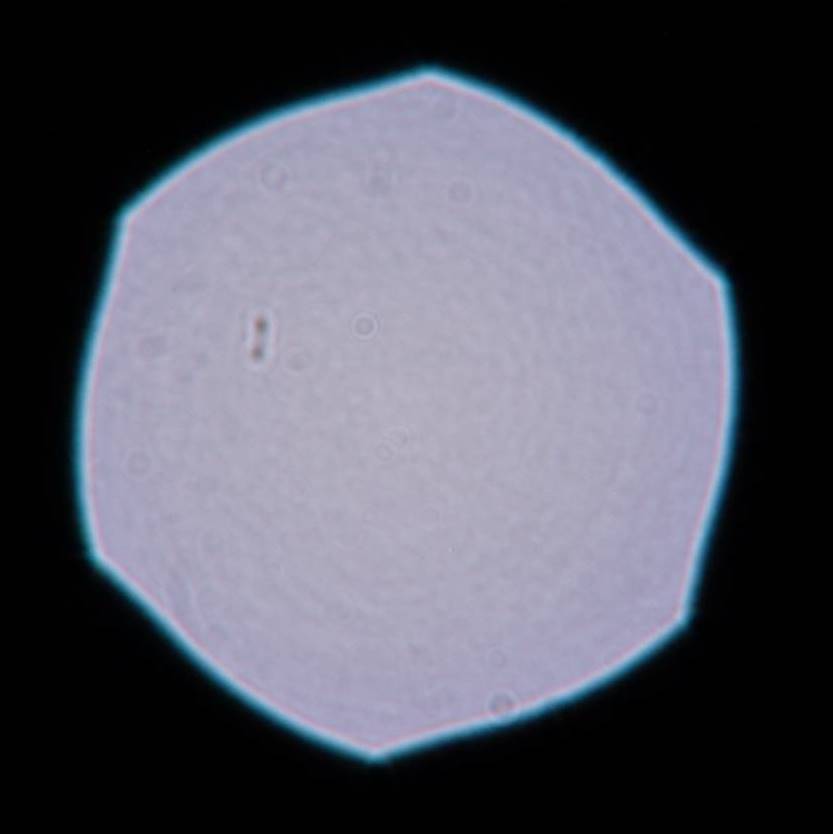

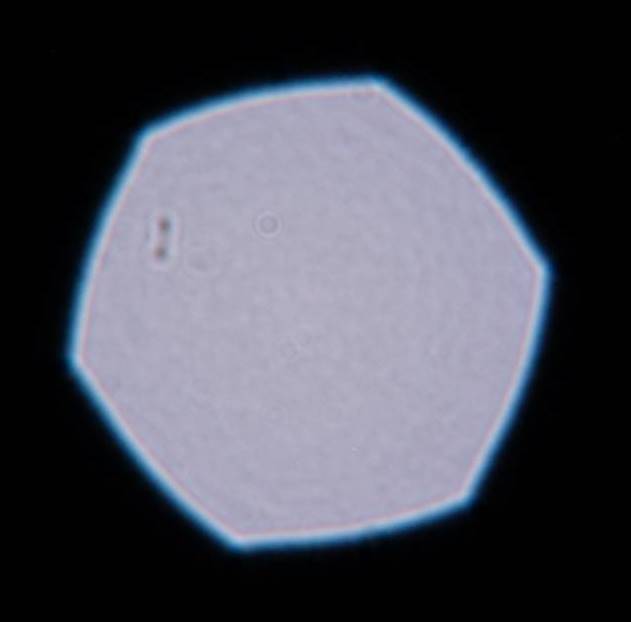

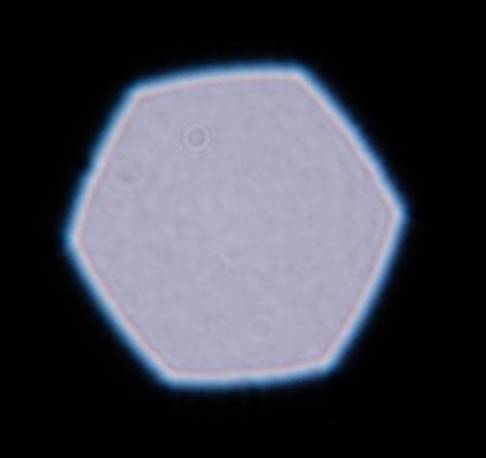

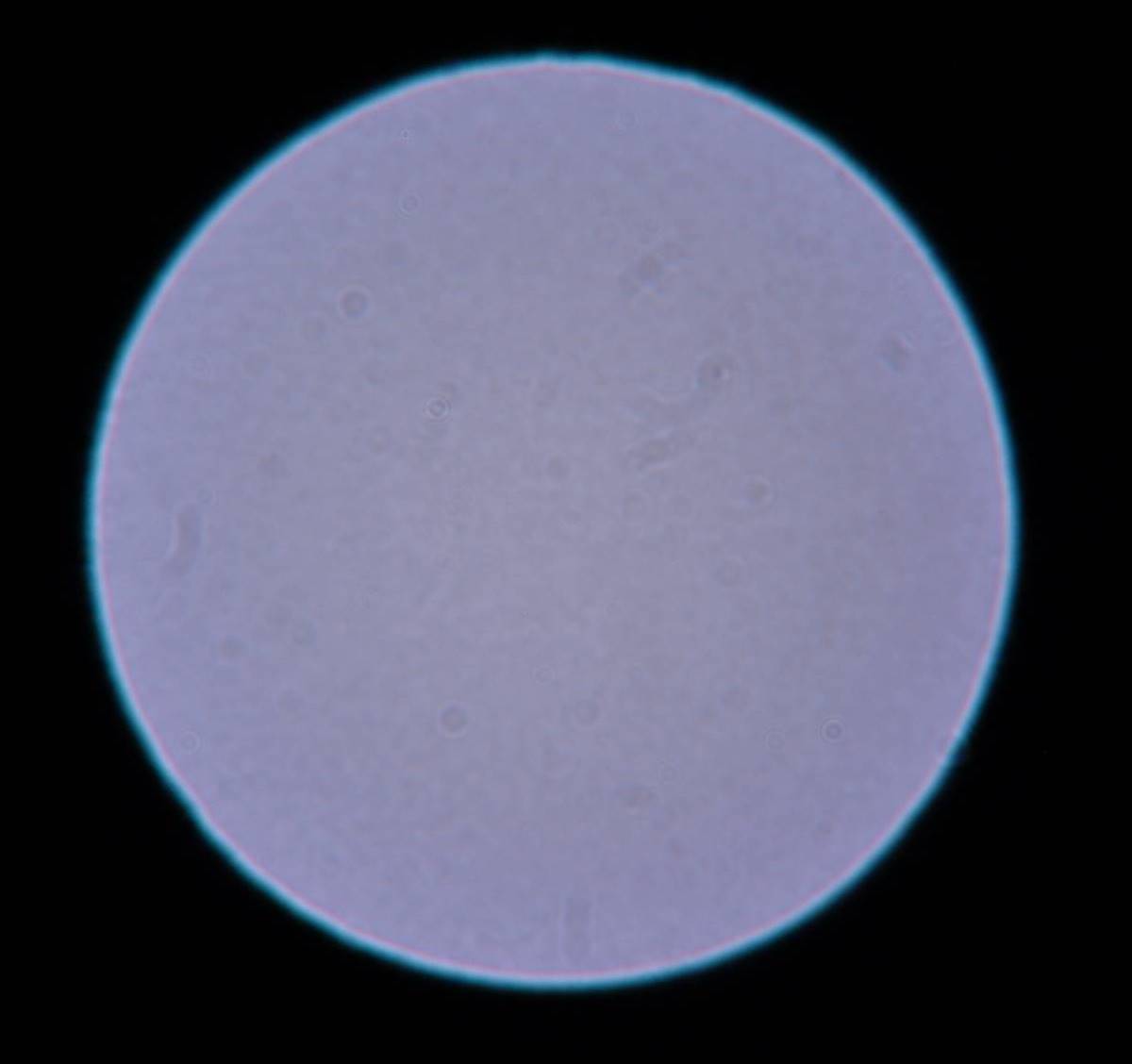

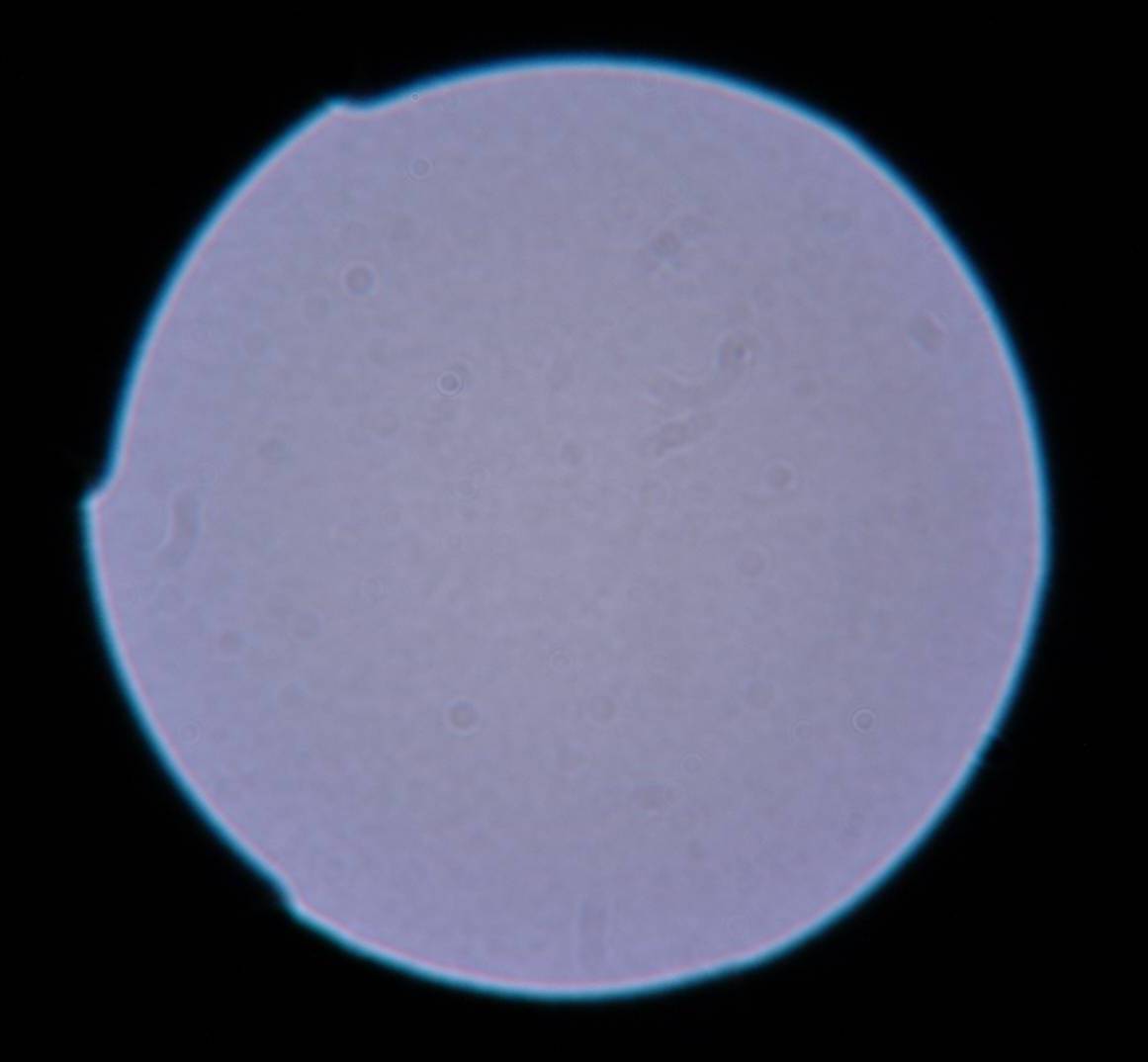

Third, your image from the 50mm SMC Tak lens seems to show non-uniform illumination top and bottom, and therefore the light may not be a point source as far as your camera is concerned. I find Matt's shots much more uniform top to bottom and side to side. So we need a more controlled definition of the light source or setup for you to be able to provide your conclusions.

Actually, I LOVE this little comparison because it is consistent with a guess I had made as to the cause. I knew it wasn't a light source defect because when I rotate the camera about the optical axis, the pattern stays oriented with the sensor. In short, I believe the horizontal bias isn't a flaw in the light source, but a sensor reflection / mirror chamber masking artifact from my Sony A350 body -- different body, significantly different bokeh! The masking is probably the dominant effect, because Sony is quite aggressive about blocking stray light (i.e., from a full-frame lens). The 50mm f/1.4 Takumars seem to be exceptionally susceptible to this; for example, I don't see this artifact with my Opteka 85mm f/1.4.

Originally posted by Lowell Goudge

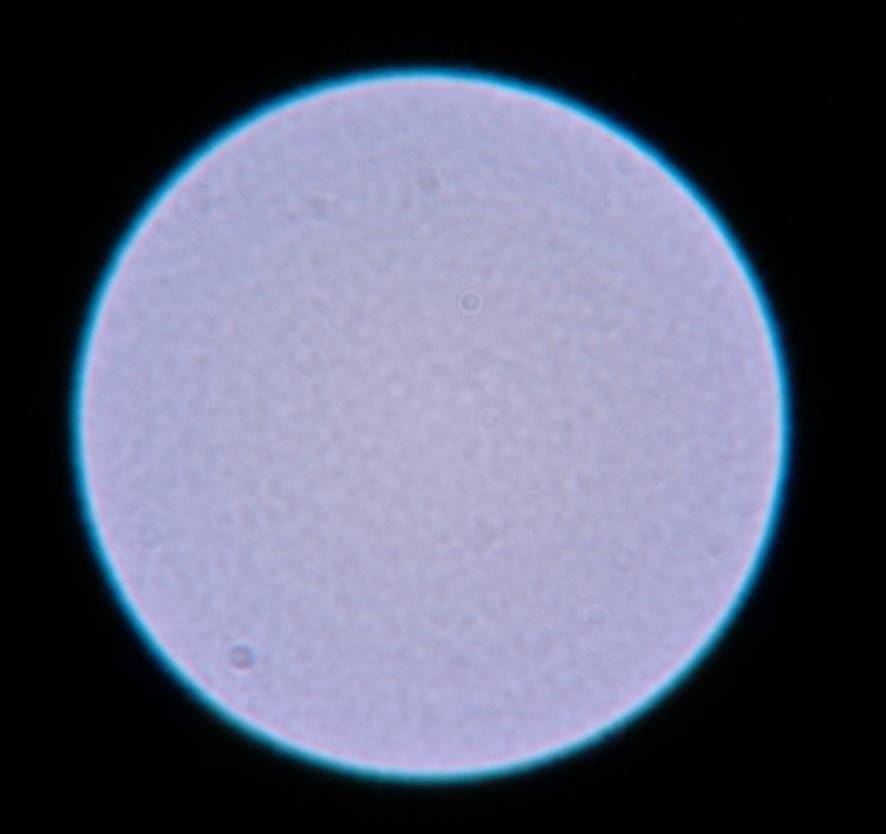

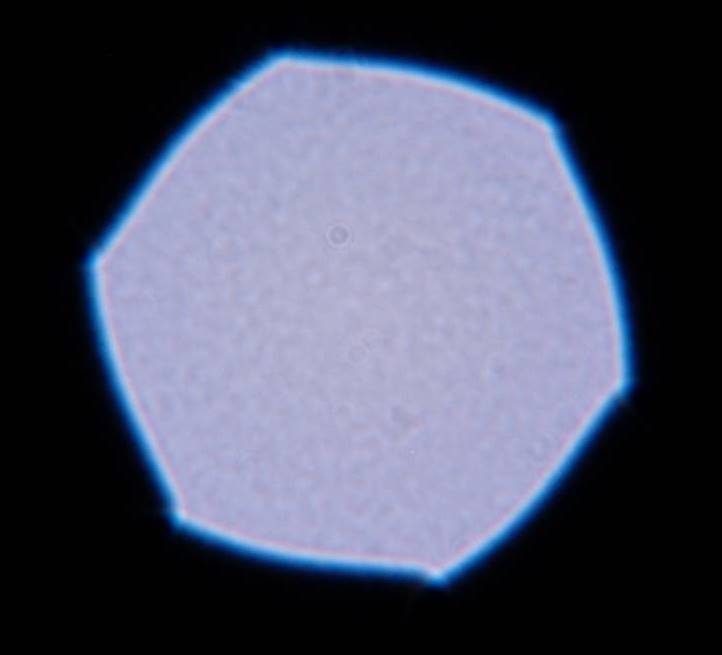

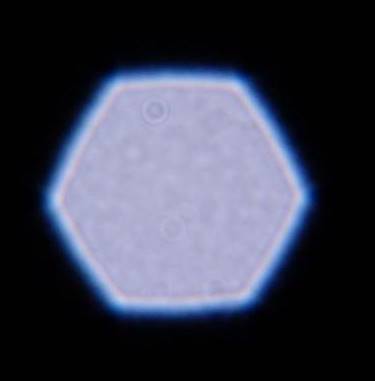

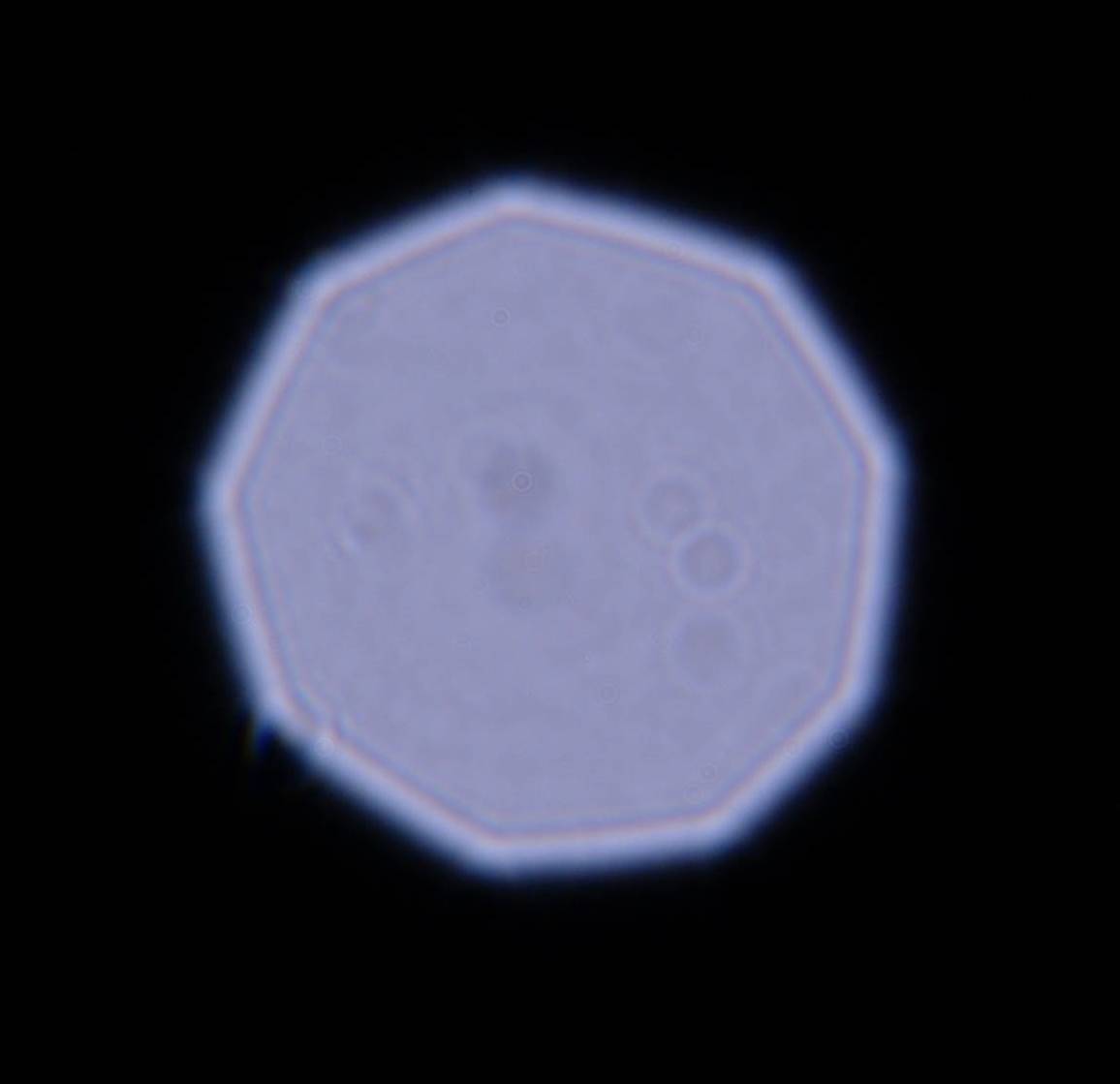

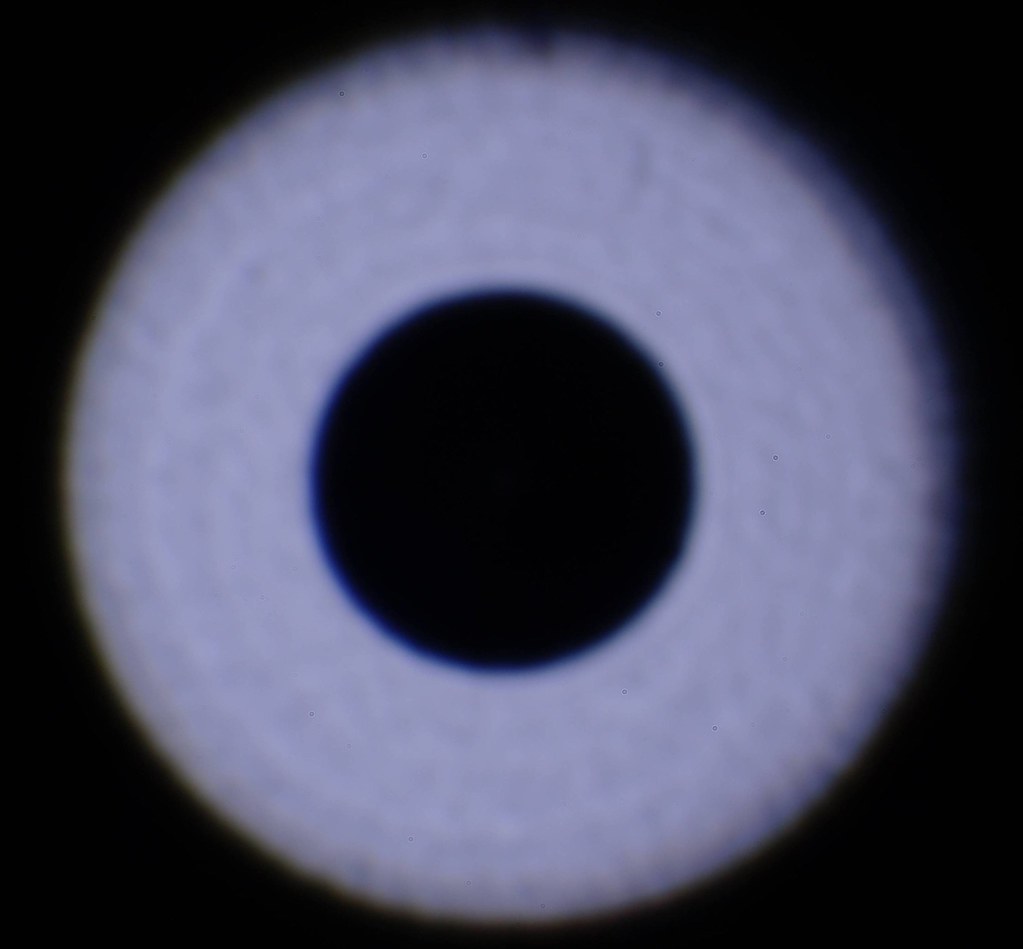

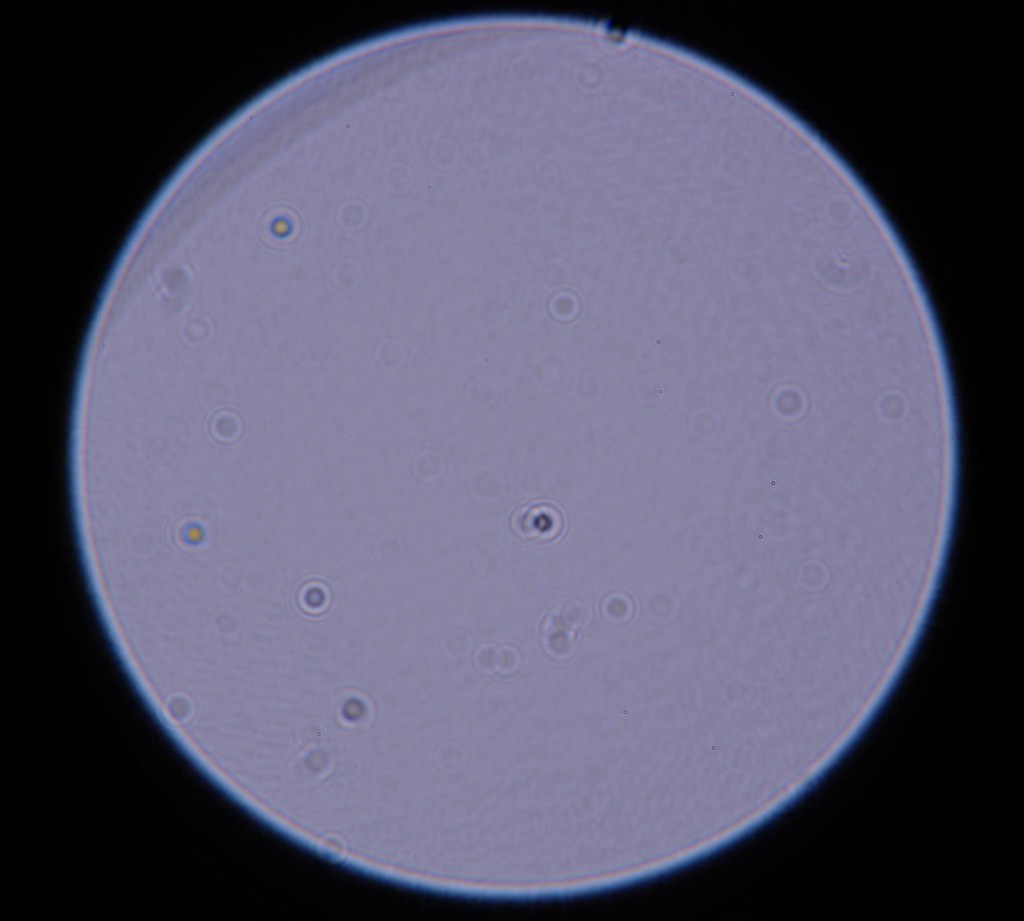

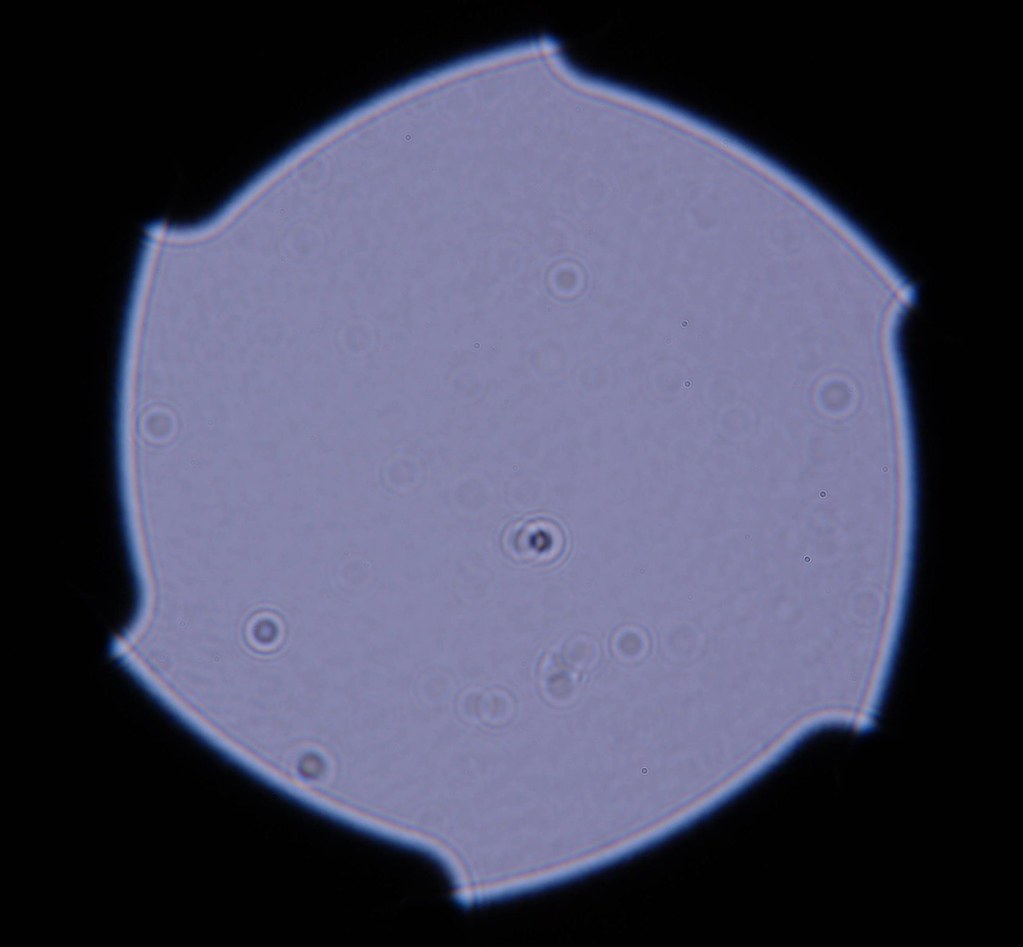

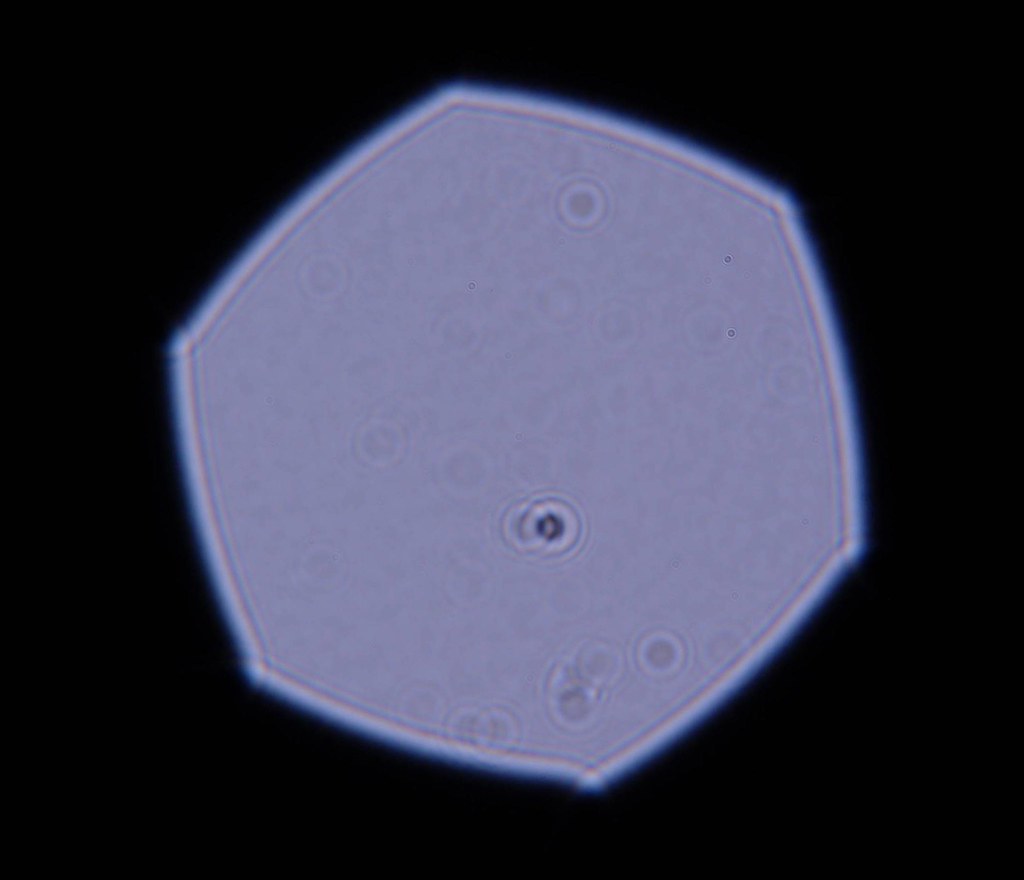

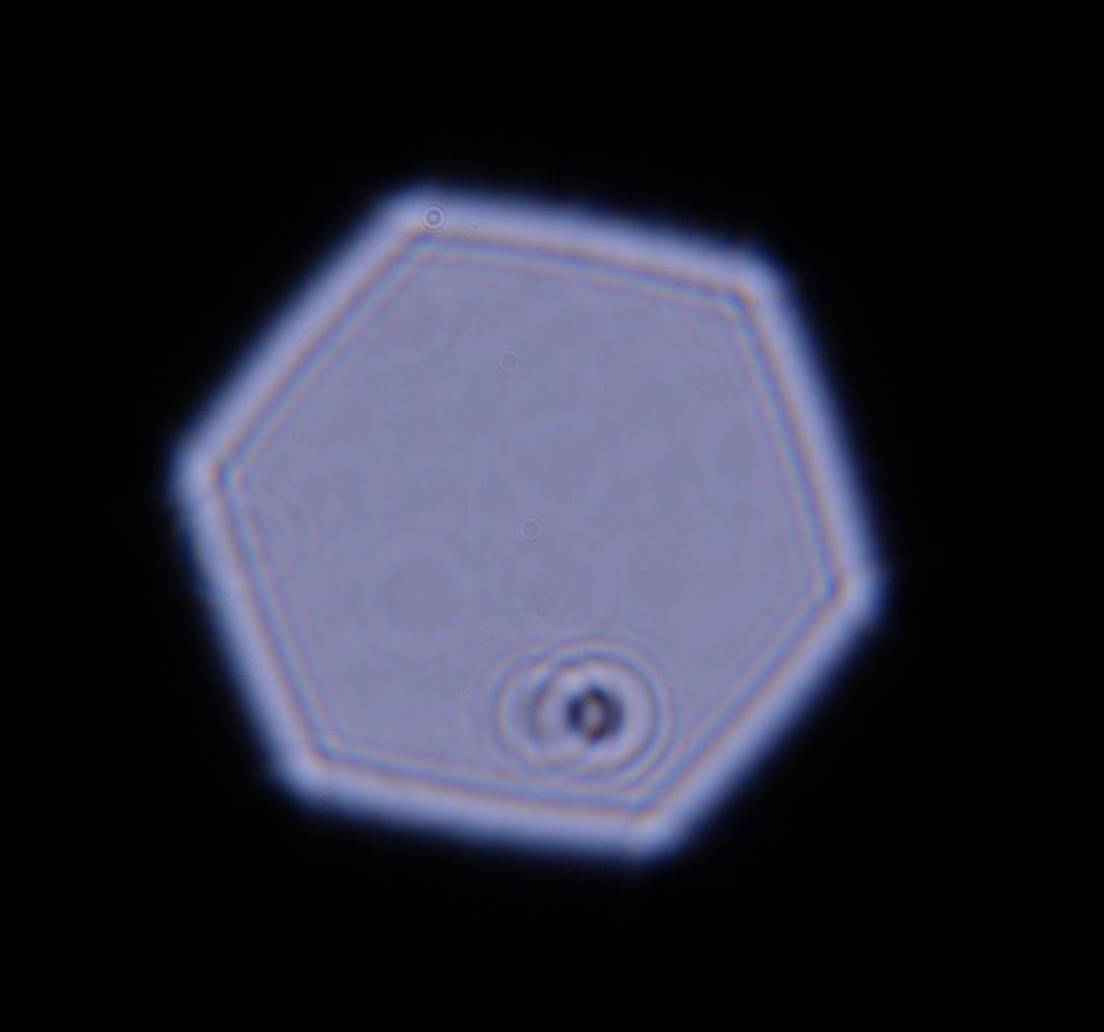

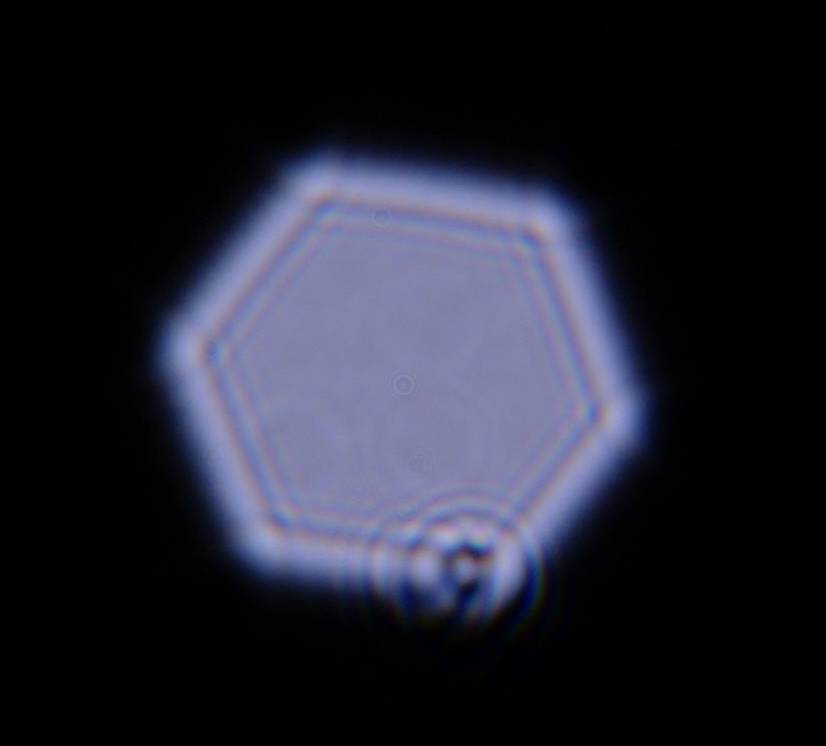

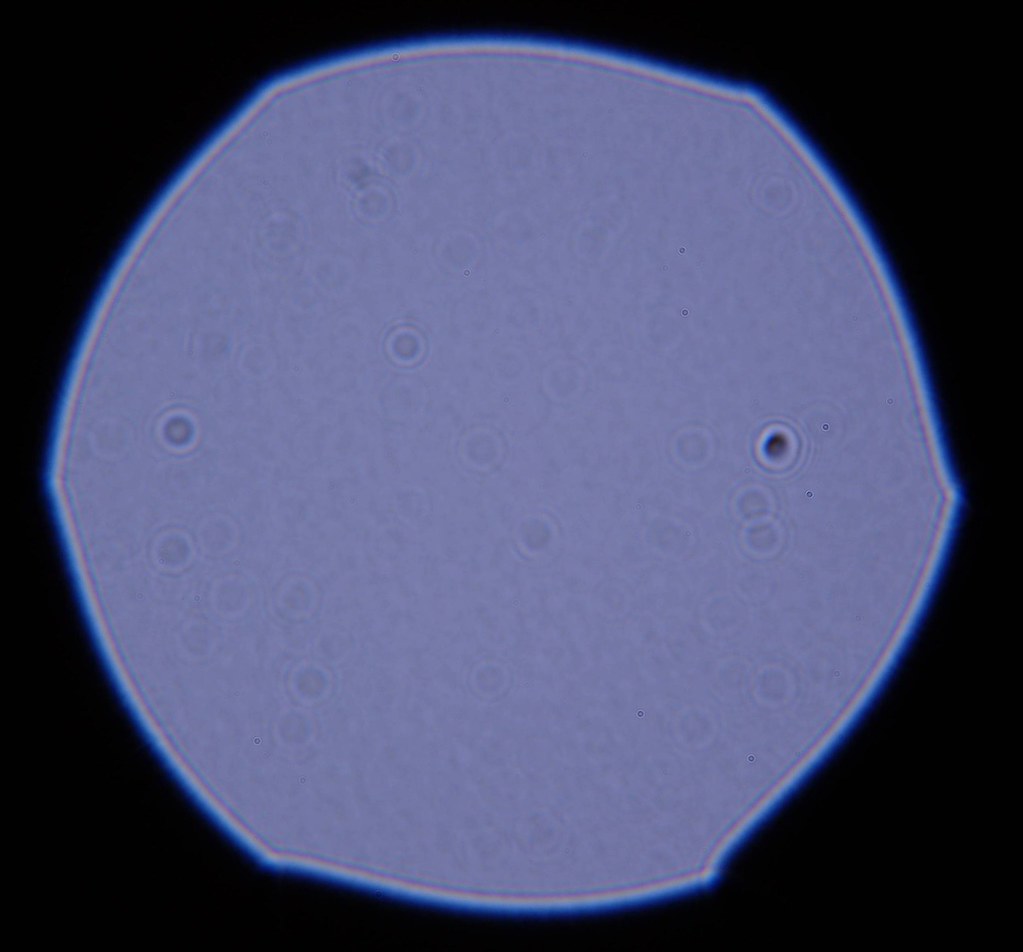

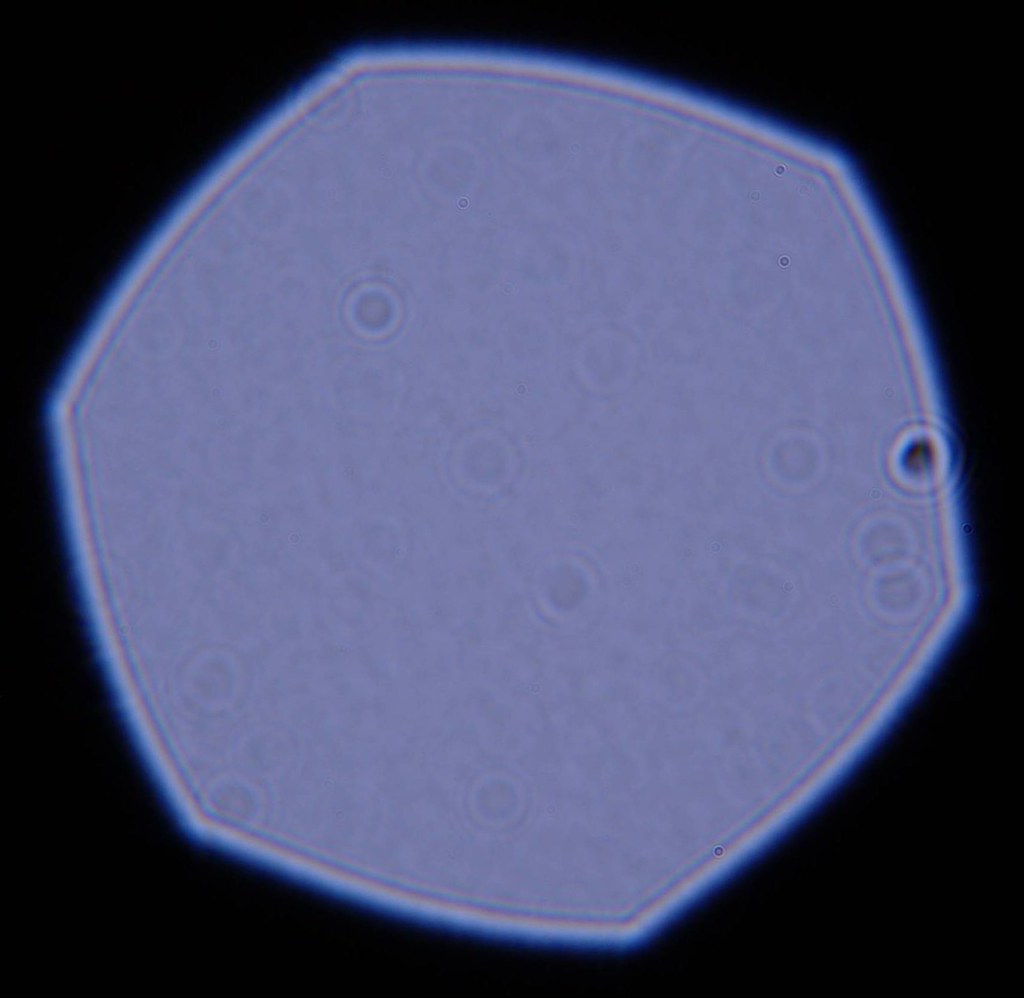

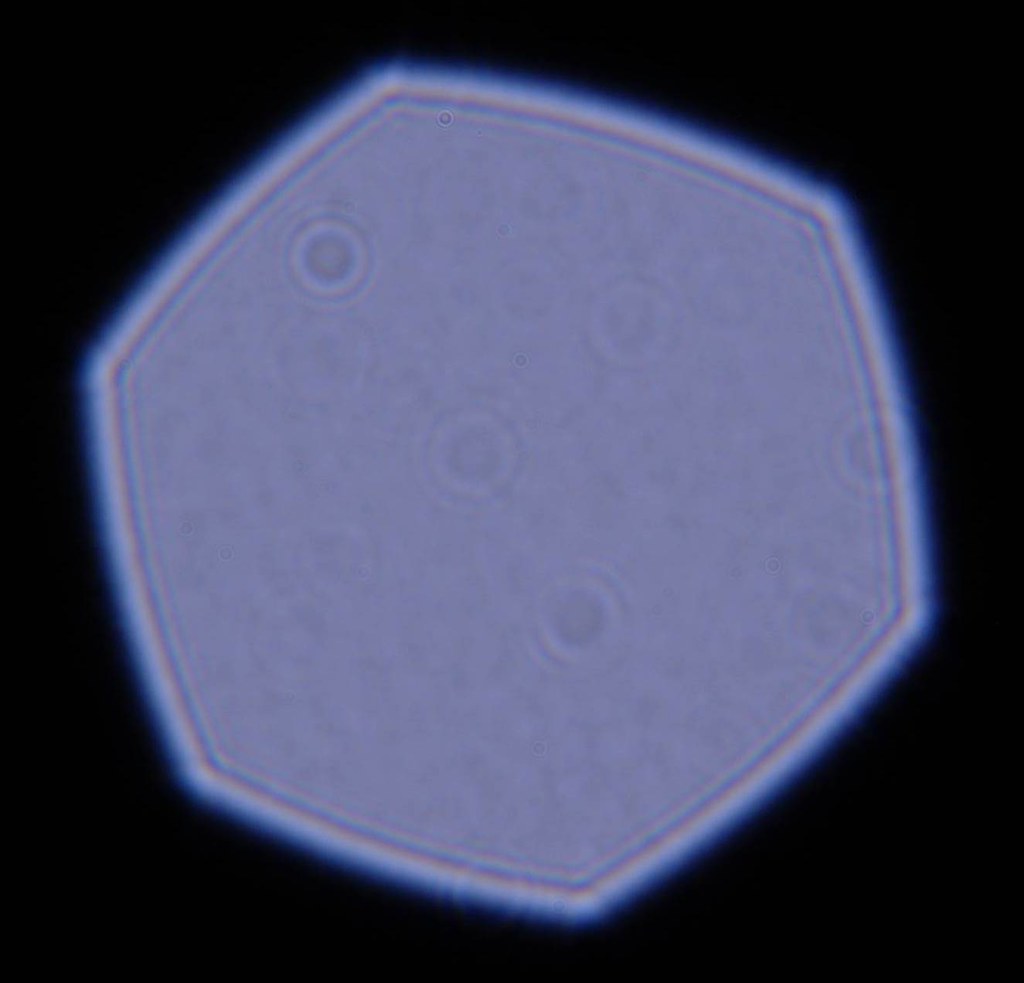

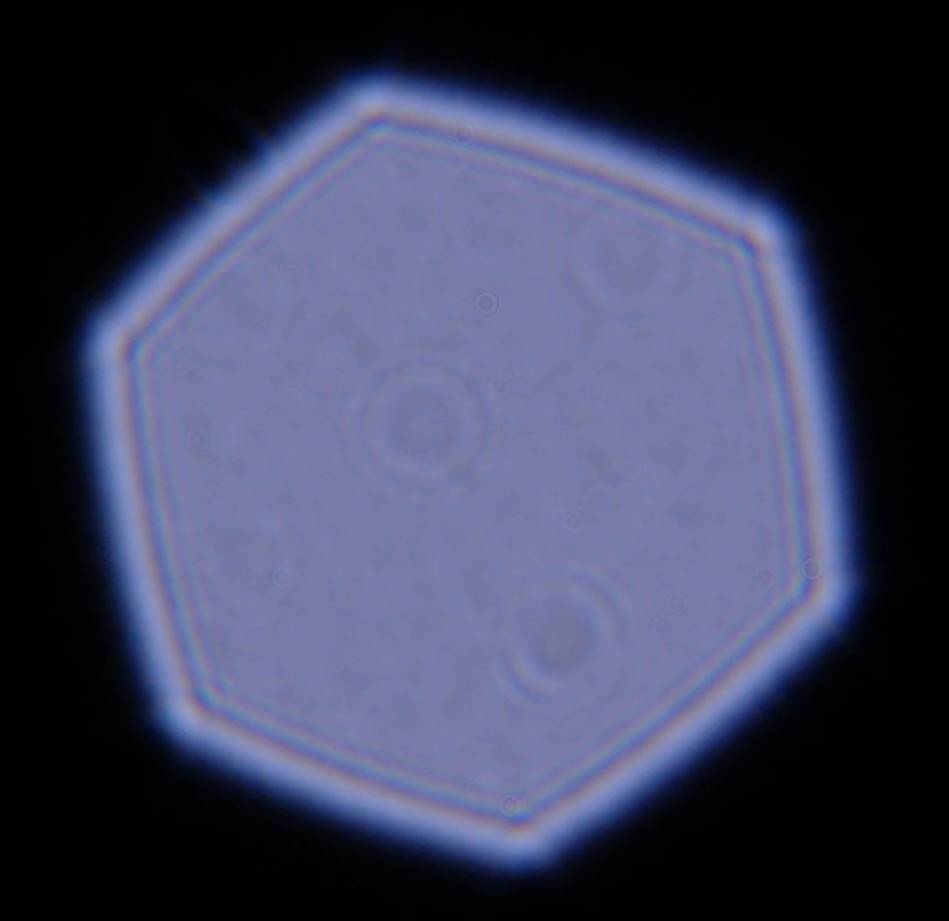

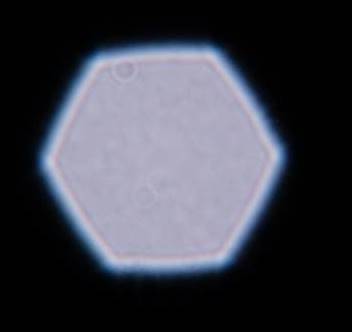

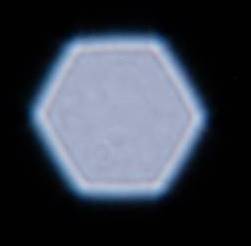

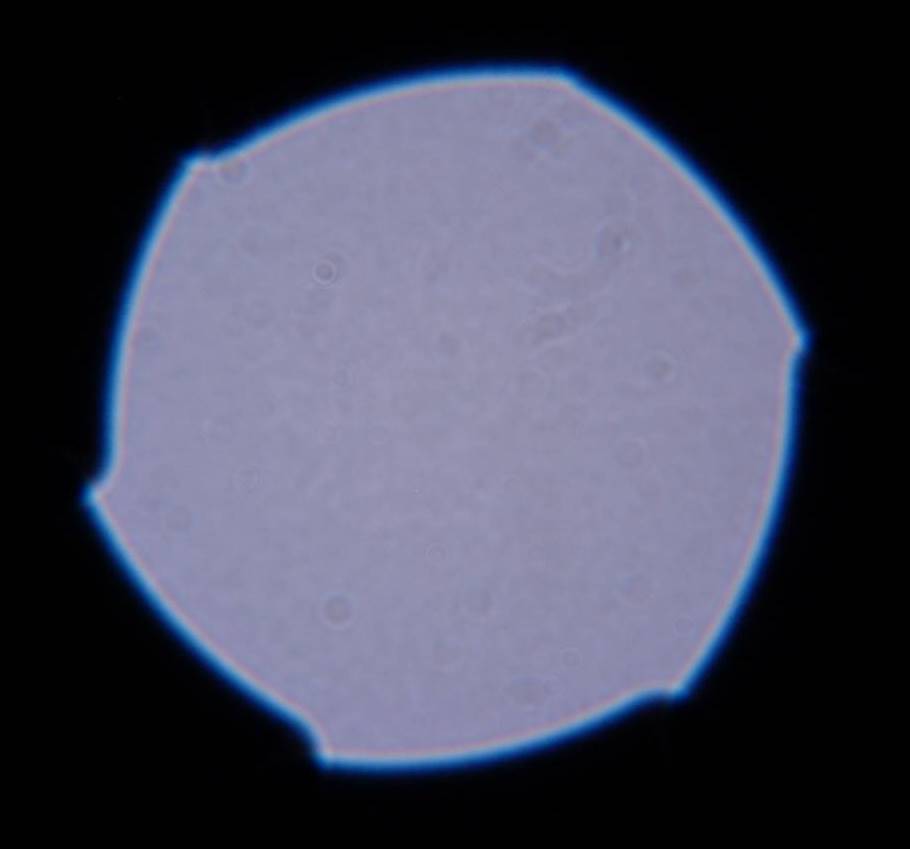

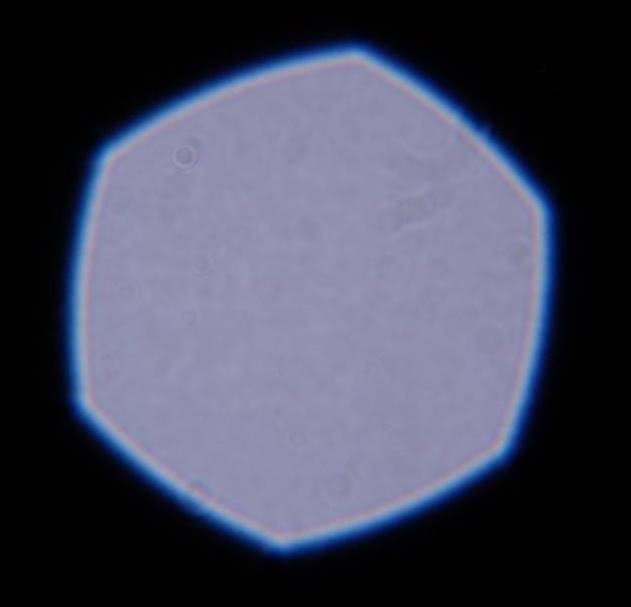

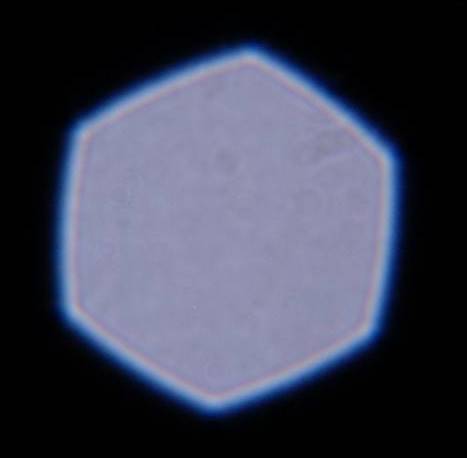

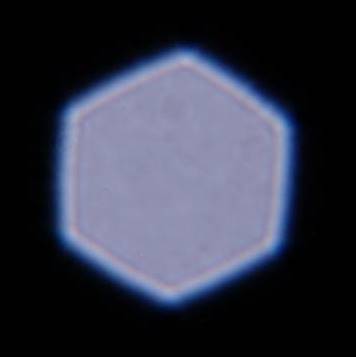

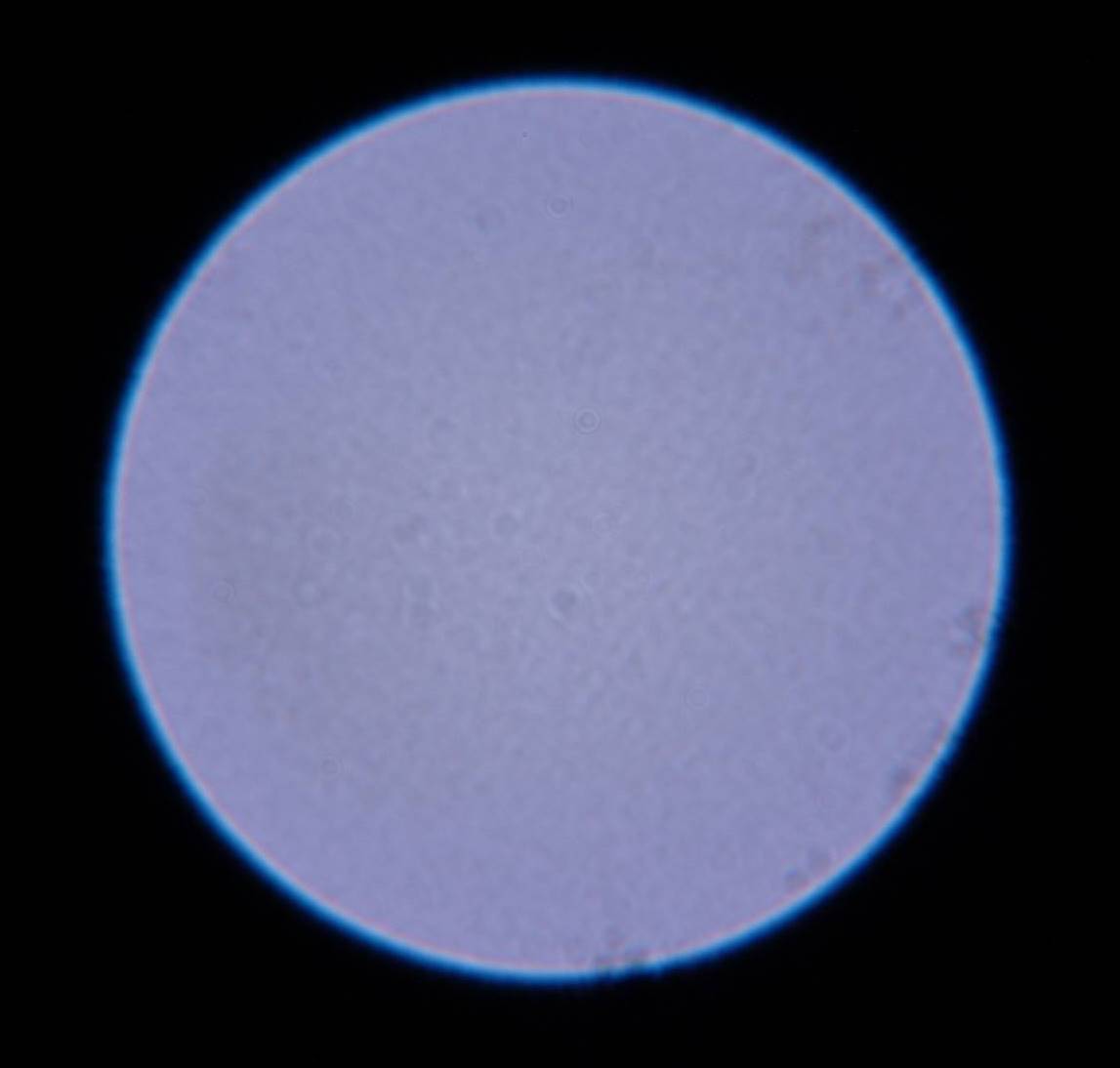

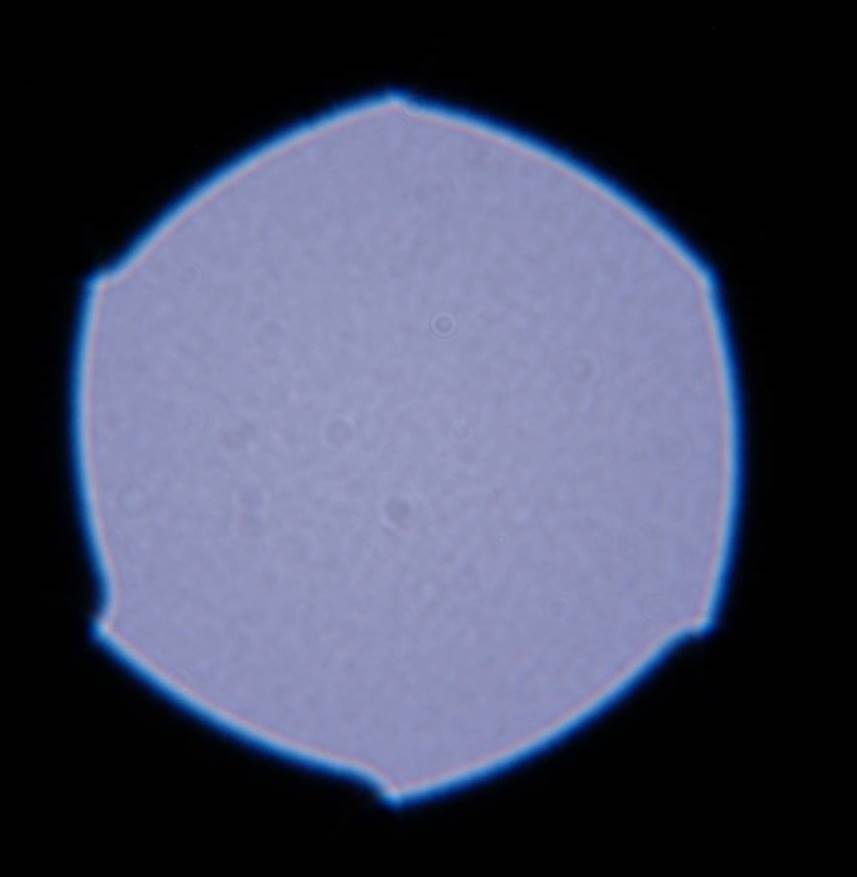

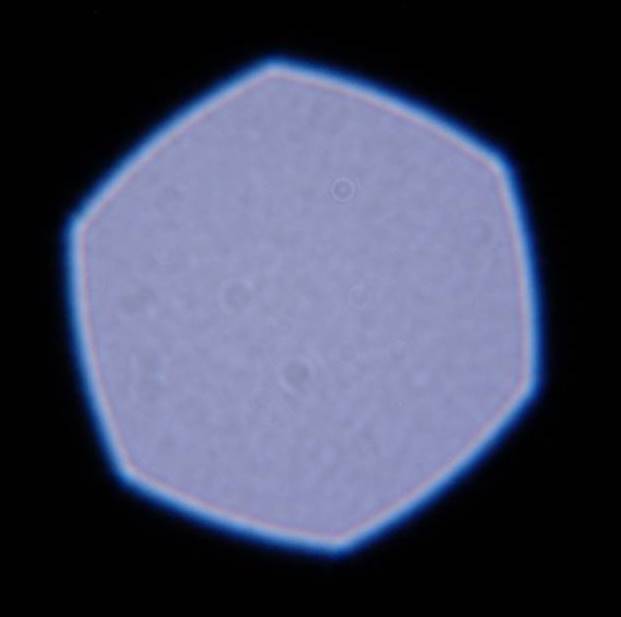

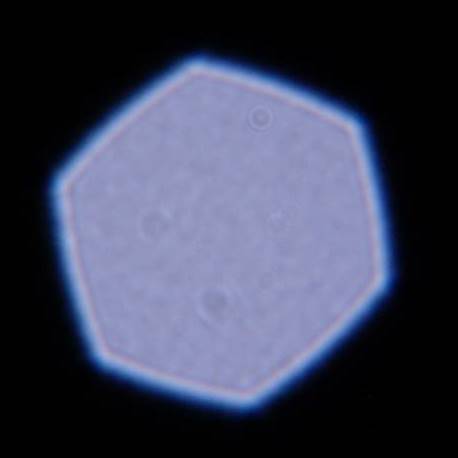

What is also interesting in some of Matt's shots is the color shift of the fringing as you stop down, and the change in shape of the aperture: some lenses have pronounced points at certain apertures, some have essentially round apertures, and others are either hexagonal or octagonal as a function of blade count. Some apertures also lose symmetry as you stop down. All of this will have an impact on bokeh and on the creation of artifacts such as bright points producing starbursts. Can you give a little more information on exactly how you will be evaluating bokeh?

I have been initially concentrating on wide open PSFs, however, the variations don't shock me. The little surprise for me is how evident diffraction effects are in some of the images... you don't see that much wide open.

As for exactly how I'm evaluating bokeh, it's pretty complicated, but here's how I got started.

I've been using CHDK to put various functions in cameras for research purposes for some time, and last year I supervised an undergrad senior project team at the University of Kentucky implementing depth map capture within an unmodified Canon PowerShot A620. The method used was simple depth-from-focus: capturing multiple images with different focus distances, determining which image was sharpest for each pixel location, and then creating a depth map image. It worked quite well -- where there was an edge or texture. Where there wasn't, it often hallucinated sharp edges at disturbingly incorrect distances. That's when I realized that the Gaussian blur model of defocus underlying all these algorithms was fundamentally and seriously wrong. So, last summer I set out to develop a model of what lenses really do.
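To make the depth-from-focus idea concrete, here is a rough sketch of the three steps described above (focus stack, per-pixel sharpness, pick the sharpest frame). It is not the student team's code: the post doesn't say which sharpness measure they used, so the absolute Laplacian here is my assumption, and the names `laplacian_sharpness` and `depth_from_focus` are hypothetical. The tiny synthetic stack is only there to show the behaviour.

```python
import numpy as np

def laplacian_sharpness(img):
    """Absolute 4-neighbour Laplacian as a per-pixel focus measure (assumed)."""
    p = np.pad(np.asarray(img, dtype=float), 1, mode="edge")
    lap = (p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
           - 4.0 * p[1:-1, 1:-1])
    return np.abs(lap)

def depth_from_focus(stack, focus_distances):
    """For each pixel, return the focus distance of the sharpest frame.

    stack:           sequence of same-sized greyscale images.
    focus_distances: focus distance used for each image in the stack.
    Also returns the winning sharpness value as a crude confidence.
    """
    sharp = np.stack([laplacian_sharpness(f) for f in stack])
    best = np.argmax(sharp, axis=0)          # index of sharpest frame per pixel
    confidence = sharp.max(axis=0)           # 0 means no edge or texture at all
    return np.asarray(focus_distances, dtype=float)[best], confidence

# Synthetic two-frame stack: a crisp line at column 2 in frame 0,
# and a crisp line at column 5 in frame 1. Everything else is flat.
frame0 = np.zeros((8, 8)); frame0[:, 2] = 1.0
frame1 = np.zeros((8, 8)); frame1[:, 5] = 1.0
depth, confidence = depth_from_focus([frame0, frame1], [1.0, 3.0])
print(depth[0])        # per-pixel distance along the first row
print(confidence[0])   # zero wherever the scene is flat
```

In the flat columns the confidence is zero and the reported depth is just whichever frame `argmax` happens to pick, which is the same failure mode as above: with no edge or texture, the answer is arbitrary.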

My initial models were mathematically perfect, noiseless, synthetic creations that I could use to test my matching algorithms. However, that doesn't tell me what real lenses do, nor what variations I can expect due to noise, JPEG processing, etc. That's what I've been testing lenses to determine... so far, I've measured PSFs for more than 3 dozen lenses on my Sony A350.... I've also tested quite a few compact digital cameras (most have small, but distinctive, PSFs).

My current best matching method involves a rather expensive Genetic Algorithm (GA), but it works quite well on perfect test images given enough time. The question is how well it can work with real lens PSFs, noise, etc. Using this approach, high quality depth-from-defocus should be possible from a single image... and then there are a bunch of other cool things one can do.

Here's a rather crude example of the kinds of other things one can do -- removal of obnoxious bokeh, a sample image pair from my "Technology Enabling Art" challenge series at dpreview:

http://c.img-dpreview.com/0205120-01.jpg

Yeah, sorry - I wanted to do this, but I couldn't get to it before I forgot, and then I forgot... I do also have a technical constraint - distance... You mention 10m, but the most I can get in my house would be about 4m or so (just eyeballing it...). Is that distance enough to get you data (I'm not too inclined to go out at night to do this)? If so I will try to do some shooting for you.