Thomas,

I did some testing of a K10D yesterday to see what happens to the black point and histogram at 1, 5, 10, 15, and 30 second durations with manual and bulb exposures. No strict temperature control was applied; the camera was at ambient and I didn't wait for it to reach thermal equilibrium. Still, the temperatures should be mostly stable because the durations were short. At 100 ISO, the black points are all 0. The mean is uniformly 0.7 to 0.8 ADU. The max was due to hot pixels and the min was due to dead pixels.
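For anyone who wants to pull the same kind of numbers out of their own darks, here is a minimal sketch. It assumes the raw frame has already been decoded into a flat list of ADU values by whatever raw tool you use; `dark_stats` and the sample values are hypothetical and only illustrate the bookkeeping, they are not K10D measurements.

```python
import statistics

def dark_stats(pixels):
    """Summarise a dark frame given as a flat list of ADU values."""
    n = len(pixels)
    return {
        "min": min(pixels),                # black point (dead pixels sit here)
        "max": max(pixels),                # dominated by hot pixels
        "mean": statistics.fmean(pixels),
        "median": statistics.median(pixels),
        # Share of pixels sitting exactly at zero, a hint of truncation
        "zero_fraction": sum(1 for p in pixels if p == 0) / n,
    }

# Made-up values for illustration only (one "hot pixel" at 4095).
sample = [0, 0, 1, 0, 2, 0, 1, 0, 0, 4095]
stats = dark_stats(sample)
print(stats)
```

A large `zero_fraction` together with a minimum of 0 is the sort of thing to look for when checking whether the left side of the histogram has been cut off.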

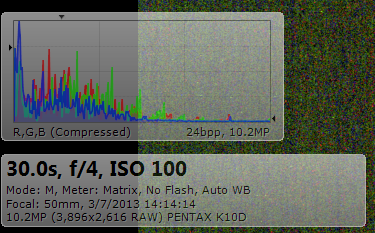

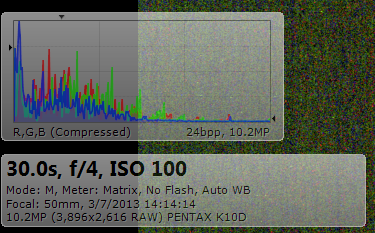

When stretching the histogram to show the full range, it looks more like your second example - a truncated curve with a tail leading off to the left with a spike.

The background bias noise in the above example has been stretched to show the histogram. The histogram is generated in the above view by averaging the 3x3 area around the mouse pointer which is placed at the center of the image.

The view above is the same sub at 300%. This shows the density of the bias noise. Note the location of the hot pixels as large blocks of color. Also note the lack of black pixels - maybe this is a view of properly non-truncated data?

I do see bias in the darks Thomas has posted (and in the short-duration ones I generated), and it is the prominent source of noise, as the vertical pattern suggests. The ring of glow around the edges in the K5IIs example looks like classic amp glow and matches what I've seen in a dark from a K5.

To answer the question of why the engineers did this, I'd point to what Craig Stark says in the conclusion of his Canon study: it's to optimize the performance of the camera for common daylight use. There is some tradeoff in dark signal being lost; maybe it's not a full stop like Stark found, but it is a sacrifice. That still raises the question: why is it not scaled under common exposures (less than 10 seconds) and then turned on by default at anything above? Thomas is right, this seems to be the reverse of what would be desired. Again, my guess is that the engineers may be thinking less about saving the dim data and more about saving headroom for highlights.

What's the remedy? A firmware update that gives a toggle to reset the black point would be ideal. Maybe Pentax will give us this control. If they do, bravo; if not, we may have to use workarounds that trick the camera, and standardize on this behavior.

In practice, it seems that if the camera's performance is profiled at a particular exposure duration and ISO setting, a map of noise across a range of temperatures would generate a usable reduction master. As these cameras are not temperature regulated, we have to depend on the EXIF temperature reading and on matching darks to lights to develop a profile. In my experience, the dark and light temperatures need to be within 2 °C of each other to be usable. Anything more does not scale properly.
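The temperature matching could be sketched roughly like this. It assumes the temperature has already been read from each file's EXIF data (the tag name depends on the tool and camera, so that step is left out) and that the library only holds darks taken at the same exposure and ISO as the light. `usable_darks`, the file names, and the temperatures are all made up for illustration.

```python
def usable_darks(light_temp_c, darks, tolerance_c=2.0):
    """Return (name, temp) darks within tolerance of the light, closest first.

    darks: list of (name, temperature_in_C) tuples for one exposure/ISO setting.
    tolerance_c: the 2 C rule of thumb from the post; adjust to taste.
    """
    close = [(name, t) for name, t in darks
             if abs(t - light_temp_c) <= tolerance_c]
    # Closest temperature first; ties keep their library order.
    return sorted(close, key=lambda d: abs(d[1] - light_temp_c))

# Hypothetical dark library, temperatures in degrees C.
library = [("dark_a", 24.0), ("dark_b", 27.0), ("dark_c", 29.0), ("dark_d", 31.5)]
matches = usable_darks(28.0, library)
print(matches)
```

An empty result means there is no dark close enough for that light, which is the cue to shoot more darks at that temperature rather than scale one from further away.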

Once we decide on the setting that works for the conditions used (for me, I've said it's 1200 second subs at 100 ISO), then all the rest of the effort revolves around that point. Mount tuning, alignment, library management, and power supply are all part of the effort to support this choice. Maybe these long durations are enough to get the heat signal out of the murk. Maybe not, as even the 1200 second, 29 °C sub I referenced had a black point of 0 and a mean of 3 (median of 0).
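A mean above zero with a median of exactly 0 is what you would expect if more than half of the pixels have been clipped at zero. Here is a small synthetic illustration of that, assuming Gaussian noise around a level slightly below zero. The offset and sigma are invented numbers, not anything measured from the camera, so this only shows the effect is plausible, not that it is what the firmware does.

```python
import random
import statistics

def clipped_dark(n, offset, sigma, seed=1):
    """Simulate n pixels of Gaussian noise, rounded to whole ADU and clipped at 0."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    return [max(0, round(rng.gauss(offset, sigma))) for _ in range(n)]

# Assumed values: true dark level 2 ADU below the clip point, 5 ADU of noise.
frame = clipped_dark(10000, offset=-2.0, sigma=5.0)
mean_adu = statistics.fmean(frame)
median_adu = statistics.median(frame)
print(mean_adu, median_adu)
```

With these assumed numbers the median comes out at 0 while the mean stays positive, because only the upper tail of the noise survives the clip.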

(I started using Linux in 1992 - just for the curious.)
Post #38 by ewelot