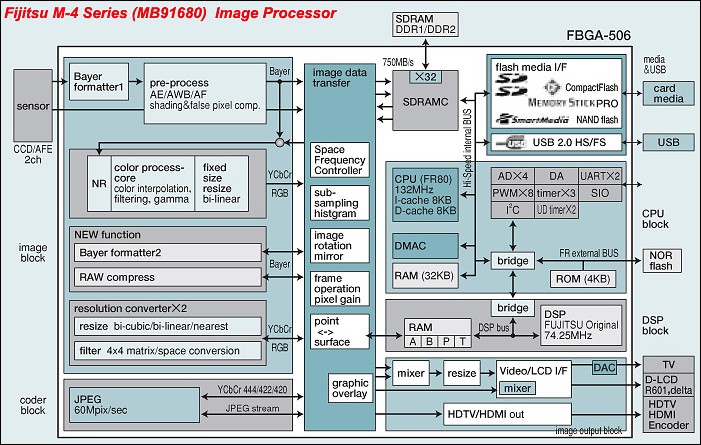

Anvh, I think you're discounting the involvement of the buffer in the overall performance of the image processor. I also think you're misunderstanding the block diagram you posted. In that block diagram, each block does not represent a "core" or a "chip" or a "processor". It represents a function, and the diagram is meant to show the flow of data and the transformations applied to it.

We know some architecture changes have taken place in the Prime processor: that block diagram is from an M-4, Prime II uses an M-5, and Prime M must use an M-6, because it is the first to include a hardware h.264 processor.

In that diagram, a JPEG gets processed this way:

1. It comes from the sensor into the large blue block on the top-left. It gets converted from analog signals to digital bits.

2. Any of the functions (AE, AWB, etc.) are performed on the RAW data.

3. It goes into the Image Data Transfer block, where it might get shuffled back in to be rescaled, for example if you select a 6MP output file.

4. It goes into the JPEG processing block where it gets processed according to the settings in the menu.

5. It goes back into the Image Data Transfer block, and from there to the screen, and to the SDRAM Controller

6. It goes into the buffer, and gets written out to the disk.

All these blocks are partially hardware, partially software paths through the same sets of chips.

Did you notice the arrow that goes directly from the Sensor, and goes directly to the Image Data Transfer block? That's the path that RAW takes. It goes back into the big blue block if you have RAW compression on, resizing, etc, and then it goes into the RAM buffer. If you have all transformations to the RAW turned off, it's a much faster path.

So the question is, what is the bottleneck? This M-4 you posted is running at 132MHz. Even in this 2007-era chip, the system had 750MB/s of access to the SDRAM controller.

Now look at the M-6. It runs at double the clock speed, uses a faster RAM controller (DDR3 vs probably DDR2 in the Prime II-equipped cameras), and has a dedicated hardware processor to handle h.264 and video. There's no quoted speed between the analog-digital converter and the RAM controller, but we can assume it's much faster than 750MB/s.

Now let's consider a maximum-speed, maximum number of frames burst from each camera:

Prime II - K-r (~16MB RAW file): 2 Sec @ 6fps = 12*16MB = 192MB of data, 96MB/s

Prime II - K-5 (~28MB RAW file): 2 Sec @ 7fps = 14*28 = 392MB of data, 196MB/s

Prime II - K-5 After FW Upgrade: ~3.4 Sec @ 7fps = 24 * 28 = 672MB of data, 196MB/s << Updated with info from other thread

Prime M - K-30 (~22MB RAW file): 1.66 Sec @ 6fps = 10*22 = 220MB of data, 132MB/s

(10 shots at 6fps is 1.66 sec)
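If anyone wants to check the arithmetic, here is a quick sketch in Python. The frame counts, frame rates and approximate file sizes are the ones quoted in the list above (28MB for the K-5, which is the figure its arithmetic uses); the function name `burst_stats` is just something made up for this post.

```python
def burst_stats(frames, raw_mb, fps):
    """Return (total MB, burst length in seconds, MB/s) for a full-speed burst."""
    total_mb = frames * raw_mb
    seconds = frames / fps
    return total_mb, seconds, total_mb / seconds


# (frames, approx. RAW size in MB, fps), as quoted in the list above
bursts = {
    "Prime II - K-r": (12, 16, 6),
    "Prime II - K-5": (14, 28, 7),
    "Prime II - K-5 after FW upgrade": (24, 28, 7),
    "Prime M - K-30": (10, 22, 6),
}

for name, (frames, raw_mb, fps) in bursts.items():
    total_mb, seconds, rate = burst_stats(frames, raw_mb, fps)
    print(f"{name}: {total_mb} MB in {seconds:.2f} s -> {rate:.0f} MB/s")
```

Note that the rate works out to file size times fps, so a longer burst at the same fps changes the total amount of data but not the MB/s.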

Clearly there are either some artificial limits in place, or the amount and speed of the RAM being used in the camera's buffer varies and is the limiting factor, especially when you consider the disparity between the K-r and the K-5, which use ostensibly the same processor.

The other thing to remember is that Pentax takes a Milbeaut chip, designs firmware that uses it, and that constitutes the Prime engine. What that software does with the chip, and how efficiently it uses its resources, will determine speed for everything: disk writes, buffer writes, UI interaction, etc. Features like focus peaking, saving the last RAW when shooting JPG, etc. all take CPU cycles and RAM, sometimes even when you're not using them.

When they design a system like that, they don't set out to make the RAW processing better or worse. There's no spec document at Pentax HQ with these bullets:

Prime M

- Much faster JPEG performance

- h.264

- Worse RAW performance

- Worse battery life

Instead, they take the hardware design and resources that have been decided on (chip being used, available ROM, available RAM, buffer size, sensor interface speed, shutter recycle time, mirror and other mechanical capabilities), and they design a system that will use these resources as efficiently as they can. When they're finished, they tune it for more speed, and when it's completely polished, the results are what they are. You can set targets when you start a project like this, but there's always the pull between features, reliability, and speed.

The software designers who write the firmware receive their specs and mandates from a number of sources:

- The product design committee wants lots of new features

- The finance department wants to use less and cheaper RAM

- The hardware engineers want to underclock the processor for more battery life

- The execs want to have at least as fast burst as the last generation

Next it's a balancing act to make sure all those sources are reasonably satisfied, while delivering a good experience to the user. That's why we programmers get paid big bucks!

(I wish)

Bottom line is we need to wait and see. I don't do a lot of burst shooting, but I'd rather go with a camera whose hardware is better and can be improved via firmware updates over time. It wouldn't surprise me if they bumped up the number of shots to 12 or more before the release, because those software engineers are working triple shifts right now, to be sure.

Edit: As shown above, note that after a firmware update, Pentax was able to increase the number of RAW images taken, but not the FPS. It had the same data rate, but was able to sustain it for longer, likely by supporting faster transfers to the SD card based on availability of faster cards.
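To make that last guess concrete, here is a toy model in Python of a buffer that fills at the burst data rate while draining to the card at the same time. It is only a sketch: the function name `burst_seconds`, the buffer size and the card write speeds are all invented for illustration and are not real K-5 specs. Only the 196MB/s fill rate comes from the figures above.

```python
def burst_seconds(buffer_mb, fill_mb_s, drain_mb_s):
    """Seconds until the buffer is full while it fills and drains at once."""
    net = fill_mb_s - drain_mb_s
    if net <= 0:
        return float("inf")  # the card keeps up, so the buffer never fills
    return buffer_mb / net


FILL_MB_S = 196   # burst data rate from the figures above
BUFFER_MB = 350   # hypothetical buffer size, NOT a real spec

# Hypothetical card write speeds, NOT measured values
for card_mb_s in (20, 95):
    secs = burst_seconds(BUFFER_MB, FILL_MB_S, card_mb_s)
    print(f"card at {card_mb_s} MB/s: buffer full after {secs:.2f} s")
```

In this model the fps and the incoming data rate stay exactly the same, and only the time until the buffer fills changes with the drain rate, which is the pattern the firmware update showed.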

I get the 14-bit vs 12-bit thing, too. But honestly, if you have to apply a +100 brightness to see the difference, that's okay with me - I don't tend to need those kinds of extreme adjustments in my shots.

Last edited by Ryan Trevisol; 06-14-2012 at 12:25 PM.
