Originally posted by falconeye

Evening Falk,

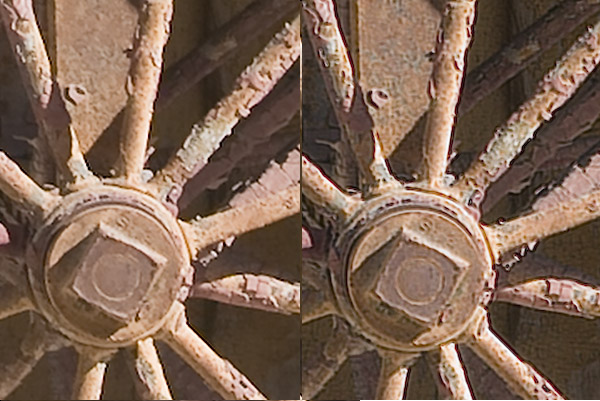

I do agree with your assessment. Photoacute has some excellent data and analysis showing anywhere from 1.3 to about 1.8x improvement. However, their analysis covers only stacking images, pixel on top of pixel (essentially HDR and noise reduction). Photoacute does not address pixel shifting. The main difference I see here is the use of a sensor shift to fill in the spaces between the pixels, which brings in new, additional information. This should actually add to the base raw resolution, beyond what stacking pixels alone can do. It is a capability that has not really been available to ordinary camera users up until now.

In a bit of literature searching, I found that a number of papers up through about 2010 showed a 1.3 to 1.5x improvement in resolution. These papers tended to look at single-row sensors using pixel shifting, which appears to impose limitations. I am sure there are other papers from that period based on sensor movement; I just did not come across them. After 2010, papers started to use whole-sensor shifting. One paper in particular took the approach of effectively shifting the entire sensor array (at least that is my interpretation) and evaluated various pixel positioning strategies. It went as far as applying the technique to an older Canon Rebel (CCD) to confirm its findings of a 4x and 8x increase in resolution. The 4x tends to align with what Olympus is claiming.

There are a number of industrial cameras that use this approach. One manufacturer describes a camera built around the 1280 × 1024-pixel array of a 1/2-in. color sensor. Under software control, 1-, 3-, 5-, 12-, and 21-Mpixel resolution can be achieved, based on the number of sensor movements and pixel positions made. The more movements, the greater the resolution. They also claim that resolution can be increased through additional subpixel movements of the sensor until the limits of the resolving power of the optics are reached. It's interesting to note that these are industrial cameras for industrial purposes, not photographic instruments used for fine art photography. I do think that there is a difference.
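As a rough sanity check on those figures, here is a back-of-envelope sketch in Python. It assumes (my assumption, not something the manufacturer states) that each mode samples an n × n grid of sub-pixel sensor positions, so the output pixel count is the native count times n². The function name `effective_megapixels` is just for illustration.

```python
# Native array size quoted for the industrial camera.
NATIVE_WIDTH = 1280
NATIVE_HEIGHT = 1024


def effective_megapixels(n: int) -> float:
    """Output size in Mpixel if the sensor visits an n x n grid of positions.

    Assumption: every position contributes a full set of new samples,
    so the pixel count scales with n squared.
    """
    return NATIVE_WIDTH * NATIVE_HEIGHT * n * n / 1e6


for n in range(1, 5):
    print(f"{n} x {n} positions: {effective_megapixels(n):.1f} Mpixel")
```

Under that assumption the 1 × 1, 2 × 2, 3 × 3, and 4 × 4 grids come out at about 1.3, 5.2, 11.8, and 21.0 Mpixel, which lines up with the quoted 1-, 5-, 12-, and 21-Mpixel modes. The 3-Mpixel mode does not fit a simple n × n grid, so it presumably uses a different positioning pattern. Note also that a 4x increase in pixel count is only a 2x increase in linear resolution, and only if the optics can deliver it.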

Then there is this interesting analysis from Martin Doppelbauer, which is an excellent treatment of the topic. The bottom line from his conclusions is "Pixel-shifted sensors do provide an improvement over unshifted sensors but they don't get anywhere near high-resolution sensors".

Overall, I do think that the pixel-shifted results will be an improvement over the native base sensor. However, the next step up in both sensor size and resolution will be safe, which is why I think the 645D/Z is safe from a super-resolving smaller sensor. Again, in the Camera Store video, the Olympus 4:3 output compared to the 645Z looked very good, very close, but no real critical analysis of image quality was presented.

I think that it's a worthwhile effort and the results will show a marked improvement, but I don't think that Pentax is going to lose any 645D/Z sales because sensor shifting is too good. If it were nirvana, they would not be offering this capability.

I came across this video overview from another camera manufacturer...

Last edited by interested_observer; 02-09-2015 at 09:59 PM.
